In our work, we first show that better generative models achieve stronger classification performance. Then, through optimizing GPT-2 for generative capabilities, we achieve top-level classification performance in many settings, providing further evidence for analysis by synthesis.

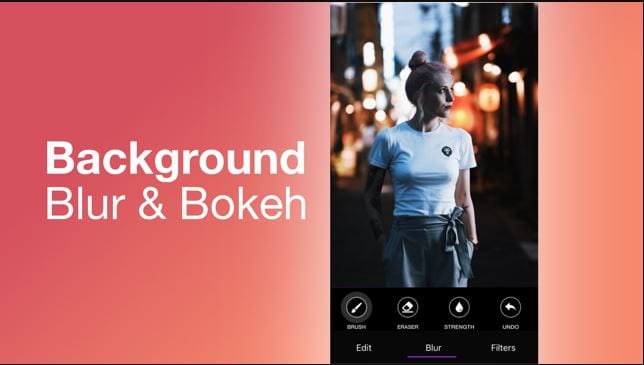

To highlight the potential of generative sequence modeling as a general purpose unsupervised learning algorithm, we deliberately use the same transformer architecture as GPT-2 in language. As a consequence, we require significantly more compute in order to produce features competitive with those from top unsupervised convolutional nets. However, our results suggest that when faced with a new domain where the correct model priors are unknown, a large GPT-2 can learn excellent features without the need for domain-specific architectural design choices.

In language, unsupervised learning algorithms that rely on word prediction (like GPT-2 and BERT) have been extremely successful, achieving top performance on a wide array of language tasks. One possible reason for this success is that instances of downstream language tasks appear naturally in text: questions are often followed by answers (which could help with question-answering) and passages are often followed by summaries (which could help with summarization). In contrast, sequences of pixels do not clearly contain labels for the images they belong to. Even without this explicit supervision, there is still a reason why GPT-2 on images might work: a sufficiently large transformer trained on next pixel prediction might eventually learn to generate diverse samples with clearly recognizable objects. Once it learns to do so, an idea known as “Analysis by Synthesis” suggests that the model will also know about object categories. Many early generative models were motivated by this idea, and more recently, BigBiGAN was an example which produced encouraging samples and features.
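To make next pixel prediction concrete, here is a minimal sketch of how an image could be turned into a 1-D sequence and then into training pairs. It assumes a tiny grayscale image and raster (row-major) ordering; the post does not specify these details, and the names `unroll` and `next_pixel_pairs` are hypothetical helpers, not part of any released code.

```python
from typing import List, Tuple

# Hypothetical toy type: a grayscale image stored as rows of pixel values.
Image = List[List[int]]


def unroll(image: Image) -> List[int]:
    """Flatten a 2-D image into a 1-D sequence in raster (row-major) order.

    Raster order is an assumption made for this sketch.
    """
    return [pixel for row in image for pixel in row]


def next_pixel_pairs(sequence: List[int]) -> List[Tuple[List[int], int]]:
    """Build (context, target) pairs: each pixel is predicted from all earlier ones."""
    return [(sequence[:i], sequence[i]) for i in range(1, len(sequence))]


toy_image = [
    [0, 255],
    [128, 64],
]
sequence = unroll(toy_image)        # the 2x2 image becomes a length-4 sequence
pairs = next_pixel_pairs(sequence)  # three prediction problems for four pixels
```

Nothing in these pairs mentions a label: the only training signal is the next pixel, which is why any knowledge of object categories would have to emerge from the generative objective itself.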
Unsupervised and self-supervised learning, or learning without human-labeled data, is a longstanding challenge of machine learning. Recently, it has seen incredible success in language, as transformer models like BERT, GPT-2, RoBERTa, T5, and other variants have achieved top performance on a wide array of language tasks. However, the same broad class of models has not been successful in producing strong features for image classification. Our work aims to understand and bridge this gap.

Transformer models like BERT and GPT-2 are domain agnostic, meaning that they can be directly applied to 1-D sequences of any form. When we train GPT-2 on images unrolled into long sequences of pixels, which we call iGPT, we find that the model appears to understand 2-D image characteristics such as object appearance and category. This is evidenced by the diverse range of coherent image samples it generates, even without the guidance of human provided labels. As further proof, features from the model achieve state-of-the-art performance on a number of classification datasets and near state-of-the-art unsupervised accuracy on ImageNet.

Logistic regression on learned features (linear probe)

We only show ImageNet linear probe accuracy for iGPT-XL since other experiments did not finish before we needed to transition to different

On JFT (300M images with 18K classes), achieved a result of

Here are some photography Dos and Don’ts to ensure you get crystal-clear photos every time.

The longer your lens, the faster your shutter speed should be. For example, a 60mm lens needs a shutter speed of at least 1/60 to keep photos from being blurry.

If a tripod is practical for the situation, there’s no reason not to use one! Even if your subject is moving, having the camera perfectly still will help immensely in controlling blur. If a tripod isn’t practical, there are plenty of handheld devices you can hook your camera or smartphone to that will stabilize your shots.

Low lighting can result in a blurred image. Try to take photos in good lighting, or turn on your camera’s flash.

Auto-focus can focus on the wrong subject or struggle to find a moving subject. Using manual focus gives you more control and can prevent blur.

A cheap lens is more likely to get scratched and cause your photos to look blurry. Cheap lenses also don’t provide the level of focus you get with a more expensive lens. Regardless of the brand or price of your lens, make sure you clean it before shooting to remove any dirt, fingerprints, or moisture, which can make pictures look blurry.

Always take initial photos without any kind of filter, whether on the lens or through a smartphone app. Filters can blur your photo and can’t be undone. Instead, take raw photos and add filters after the fact to ensure the best quality and avoid a pixelated image.
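The shutter speed tip can be written as a quick calculation. The sketch below generalizes the 60mm example to the common "1 over focal length" rule of thumb, which is an assumption here since only the single 60mm case is stated; the function names are hypothetical.

```python
def slowest_handheld_shutter(focal_length_mm: float) -> float:
    """Longest exposure time in seconds suggested by the 1/focal-length rule.

    Assumes the rule of thumb generalizes from the 60mm -> 1/60 example.
    """
    if focal_length_mm <= 0:
        raise ValueError("focal length must be positive")
    return 1.0 / focal_length_mm


def is_fast_enough(shutter_seconds: float, focal_length_mm: float) -> bool:
    """True if the shutter time is at or below the rule-of-thumb limit."""
    return shutter_seconds <= slowest_handheld_shutter(focal_length_mm)


# A 60mm lens at 1/125 s passes the check; the same lens at 1/30 s does not.
ok_at_125 = is_fast_enough(1 / 125, 60)
ok_at_30 = is_fast_enough(1 / 30, 60)
```

Treat the result as a starting point only: a tripod or other stabilizer lets you shoot slower than this limit, and a fast-moving subject may call for something much quicker.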
